What is it about?

The mammalian visual cortex exhibits significant neuronal activation in response to signals associated with self-generated movements. This study investigates the computational significance of such signals for visual perception, demonstrating that they may act to stabilize perception during eye movements.

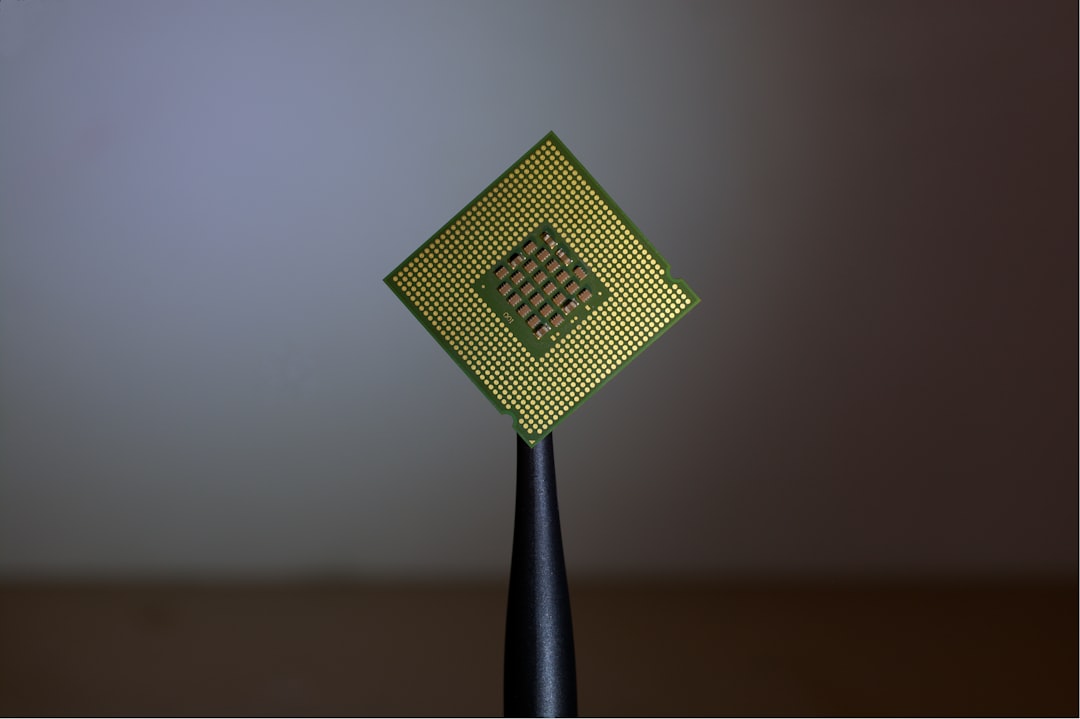

Featured Image

Photo by Marina Vitale on Unsplash

Why is it important?

Why do signals related to self-generated movements activate neurons in visual cortical areas? Using convolutional neural networks (CNNs) as simplified mechanistic models of the "hybridized" ventral and dorsal visual streams, we showed that these models can use movement signals, co-propagating with visual inputs, to recognize objects robustly. Robust object recognition is a key feature of visual stability, together with the suppression of motion perception (not examined here and to be addressed in future work with dynamical models).
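To make the idea of movement signals "co-propagating" with visual inputs more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the architecture used in the study: the helper names `shift_image` and `with_motor_channels`, the integer pixel shift standing in for an eye movement, and the choice to attach the movement signal as two extra per-pixel channels are all hypothetical.

```python
from typing import List, Tuple

Image = List[List[float]]  # grayscale image: rows of pixel intensities


def shift_image(image: Image, dx: int, dy: int) -> Image:
    """Translate the image by (dx, dy) pixels, padding with zeros.

    Assumption: a pure translation stands in for the retinal displacement
    caused by an eye movement.
    """
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            sy, sx = y - dy, x - dx  # source pixel that lands on (x, y)
            if 0 <= sy < h and 0 <= sx < w:
                out[y][x] = image[sy][sx]
    return out


def with_motor_channels(
    image: Image, dx: int, dy: int
) -> List[List[Tuple[float, float, float]]]:
    """Attach the movement signal (dx, dy) to every pixel as two extra channels.

    Assumption: this is one simple way to let a movement signal travel
    alongside the visual input into a network's first layer.
    """
    return [[(pixel, float(dx), float(dy)) for pixel in row] for row in image]


# A small vertical bar, displaced one pixel to the right by a simulated movement.
image = [[0.0, 1.0, 0.0],
         [0.0, 1.0, 0.0],
         [0.0, 0.0, 0.0]]
dx, dy = 1, 0
shifted = shift_image(image, dx, dy)
network_input = with_motor_channels(shifted, dx, dy)
```

In this sketch, a classifier receiving `network_input` sees both the displaced image and the movement that displaced it, which is the kind of information it would need to recognize the same object regardless of where it lands on the retina.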

Perspectives

Without an operational definition of visual perception, it is difficult to study its stability. Previous studies have therefore focused on algorithms that may be related to it, such as the transformation between spatial reference frames. Here, we operationally defined visual perception as the activity states of networks trained to classify features in the visual world. By using CNNs as (static) mechanistic models, we demonstrated a pivotal feature of visual stability: self-generated movement signals that co-propagate with visual inputs can support the classification performance of the models. Furthermore, this framework could be used to explain other visual phenomena, such as those related to the perception of phosphenes and the higher discrimination power of network components compared to behavioral output.

Andrea Benucci

Read the Original

This page is a summary of: Motor-related signals support localization invariance for stable visual perception, PLOS Computational Biology, March 2022, PLOS, DOI: 10.1371/journal.pcbi.1009928.
