What is it about?

A point cloud, as the name suggests, is a collection, or cloud, of many points in 2D or 3D space. Point clouds are the output of most 3D scanning processes and form the basis of many 3D models, metrology workflows, and computer graphics applications. In graphics and computer vision, many point cloud features are hand-designed, which is cumbersome and requires expert knowledge. An alternative is to transform the point cloud into a mesh grid, but this often loses accuracy or is computationally expensive. The success of convolutional neural networks (CNNs) at analyzing images raises the question: can CNNs be used to model point clouds directly? The authors propose a new neural network module called "EdgeConv." EdgeConv supports CNN-based high-level tasks on point clouds, such as classification and segmentation. The module is adaptable and can easily be added to existing CNN architectures. According to the authors, EdgeConv shows several advantages over existing methods, and extensive testing shows that it achieves state-of-the-art performance on benchmark datasets.
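To make the idea concrete, here is a minimal NumPy sketch of a single EdgeConv-style layer. As described in the original paper, each point is connected to its k nearest neighbours, an edge feature is computed from the point and the offset to each neighbour, and the edge features are aggregated with a maximum. Everything else here is an assumption made for illustration: the function names `knn_indices` and `edge_conv`, the random `weights`, the exclusion of the point itself from its neighbour list, and the single linear map with ReLU standing in for the learned network. It is not the authors' implementation.

```python
import numpy as np


def knn_indices(points, k):
    """Return, for each point, the indices of its k nearest neighbours."""
    # Pairwise squared Euclidean distances, shape (N, N).
    diff = points[:, None, :] - points[None, :, :]
    dist2 = np.sum(diff ** 2, axis=-1)
    # Assumption for this sketch: a point is not its own neighbour.
    np.fill_diagonal(dist2, np.inf)
    return np.argsort(dist2, axis=1)[:, :k]


def edge_conv(points, weights, k):
    """One EdgeConv-style layer: edge features followed by max aggregation.

    points  : (N, D) array of point coordinates or features
    weights : (2 * D, F) array, a hypothetical stand-in for learned parameters
    k       : number of neighbours per point
    """
    idx = knn_indices(points, k)                         # (N, k)
    neighbors = points[idx]                              # (N, k, D)
    centers = np.repeat(points[:, None, :], k, axis=1)   # (N, k, D)
    # Edge feature: the centre point concatenated with the neighbour offset.
    edge_feats = np.concatenate([centers, neighbors - centers], axis=-1)
    # Linear map plus ReLU in place of a trained multilayer perceptron.
    activated = np.maximum(edge_feats @ weights, 0.0)    # (N, k, F)
    # Max over neighbours, so the neighbour ordering does not matter.
    return activated.max(axis=1)                         # (N, F)


rng = np.random.default_rng(0)
cloud = rng.normal(size=(128, 3))   # synthetic 3D point cloud
W = rng.normal(size=(6, 16))        # random, untrained weights
features = edge_conv(cloud, W, k=8)
```

In the paper's dynamic graph variant, the neighbour graph is recomputed from the output features of each layer rather than fixed from the input coordinates. In this sketch that would correspond to calling `edge_conv` again on `features` with a weight matrix of matching size.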

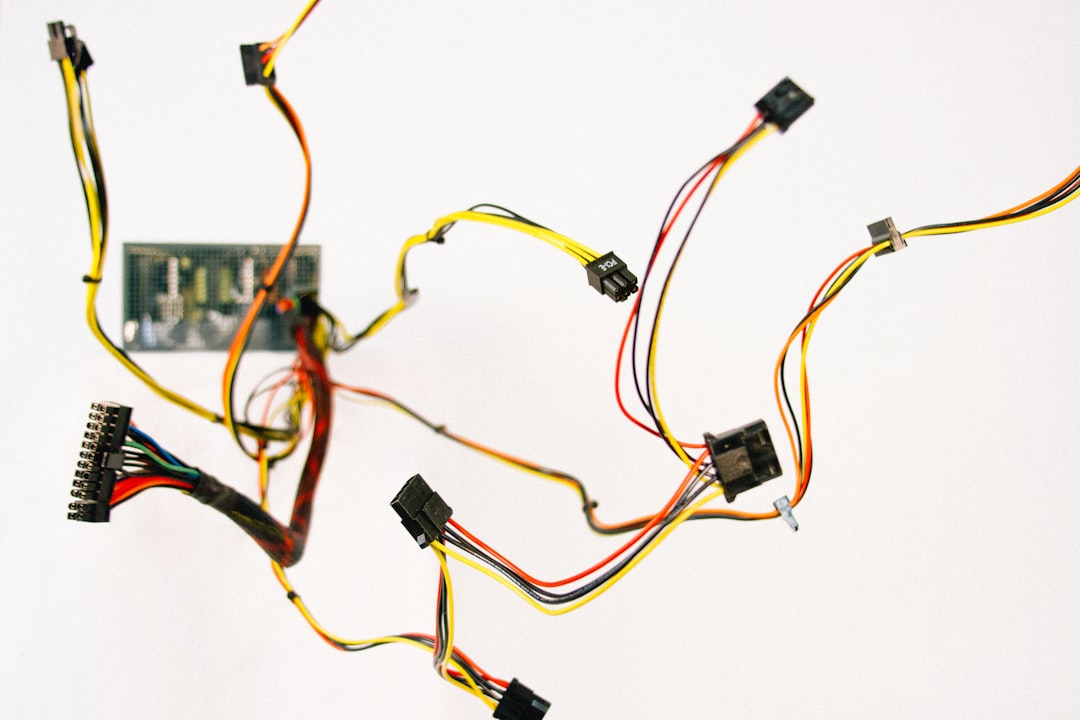


Photo by DeepMind on Unsplash

Why is it important?

Point clouds, or scattered collections of points in 2D or 3D, are the simplest shape representation. Many 3D sensing technologies, such as LiDAR scanners and stereo reconstruction, output point clouds. There is a growing number of applications for point cloud analysis. Technologies like self-driving vehicles and robotics depend on accurate point cloud geometry, and that accuracy is also critical to shape synthesis, object manufacturing, and modelling. KEY TAKEAWAY: Bringing CNN ideas and methods to point clouds makes it possible to analyze point cloud geometry more effectively. EdgeConv can perform high-level tasks on point clouds. It outperforms traditional methods and is a first step toward the future of point cloud analysis.

Read the Original

This page is a summary of: Dynamic Graph CNN for Learning on Point Clouds, ACM Transactions on Graphics, November 2019, ACM (Association for Computing Machinery), DOI: 10.1145/3326362.

